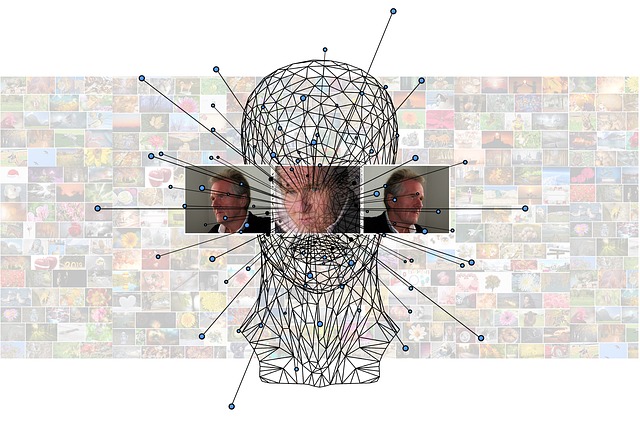

Freshly published: Single-Layer Vision Transformers for More Accurate Early Exits with Less Overhead

We are proud to share that the team from Aarhus University (AU) has just published a paper on deep learning and the deployment of such models in time-critical applications with limited computational resources, for instance in edge computing systems and IoT networks.

The article has recently been trending in academic research circles, so make sure to read it if you are interested in the topic. It is also highly relevant to MARVEL, given the research we are conducting in Machine Learning and Deep Learning for utilizing extreme-scale audio-visual data in the context of a smart city.

Abstract of the paper

Deploying deep learning models in time-critical applications with limited computational resources, for instance in edge computing systems and IoT networks, is a challenging task that often relies on dynamic inference methods such as early exiting. In this paper, we introduce a novel architecture for early exiting based on the vision transformer architecture, as well as a fine-tuning strategy that significantly increase the accuracy of early exit branches compared to conventional approaches while introducing less overhead. Through extensive experiments on image and audio classification as well as audiovisual crowd counting, we show that our method works for both classification and regression problems, and in both single- and multi-modal settings. Additionally, we introduce a novel method for integrating audio and visual modalities within early exits in audiovisual data analysis, that can lead to a more fine-grained dynamic inference.

You may read the full paper here.
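For readers new to the topic, the general idea of early exiting mentioned in the abstract can be illustrated with a minimal sketch. This is not the paper's single-layer vision transformer architecture or its fine-tuning strategy; it is a generic confidence-threshold scheme, and the names `early_exit_predict`, `stages`, `heads` and the `threshold` value are hypothetical, chosen only for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def softmax(logits: Sequence[float]) -> Vector:
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def early_exit_predict(
    x: Sequence[float],
    stages: Sequence[Callable[[Vector], Vector]],
    heads: Sequence[Callable[[Vector], Vector]],
    threshold: float = 0.9,
) -> Tuple[int, int]:
    """Run backbone stages one by one and stop at the first exit branch
    whose top class probability reaches `threshold`.

    Returns (predicted_class, index_of_exit_used). The last stage always
    answers, so an input that never looks "easy" still gets a prediction.
    """
    if not stages:
        raise ValueError("at least one stage is required")
    h = list(x)
    for i, (stage, head) in enumerate(zip(stages, heads)):
        h = stage(h)                 # backbone computation up to this point
        probs = softmax(head(h))     # cheap exit branch on intermediate features
        confidence = max(probs)
        if confidence >= threshold or i == len(stages) - 1:
            return probs.index(confidence), i
    raise ValueError("stages and heads must have the same length")


# Toy stand-ins (assumptions): each stage doubles the features, and each
# exit head simply treats the features as class logits.
stages = [lambda h: [2.0 * v for v in h]] * 4
heads = [lambda h: list(h)] * 4

easy = early_exit_predict([2.0, 0.0], stages, heads)   # confident at first exit
hard = early_exit_predict([0.5, 0.0], stages, heads)   # needs more stages
print(easy, hard)
```

In this toy setup the "easy" input leaves at the first exit, while the "hard" input passes through more stages before its confidence clears the threshold, which is the compute-versus-accuracy trade-off that dynamic inference aims to exploit.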

For more direct updates from the MARVEL team, follow us on LinkedIn and Twitter. For regular updates, including a collection of news and relevant information, sign up for our newsletter.

Funding

This project has received funding from the European Union’s Horizon 2020 Research and Innovation program under grant agreement No 957337. The website reflects only the view of the author(s) and the Commission is not responsible for any use that may be made of the information it contains.