Low-complexity acoustic scene classification in DCASE2022 Challenge

Introduction

The annual DCASE (Detection and Classification of Acoustic Scenes and Events) Challenge has a substantial role in steering research interest in the audio content analysis of everyday environments. Acoustic scene classification has been one of the main topics in the challenge throughout its history. The goal of acoustic scene classification (ASC) is to classify a test recording into one of the ten predefined acoustic scene classes (e.g., street, park, and tram).

Task description

Under the DCASE2022 Challenge, TAU and FBK organized a task focusing on the research question about the ASC system’s generalization properties across several different audio-capturing devices while directing the solutions toward low-complexity systems. The focus of the task was pushed towards real-world applicability in low-end IoT devices by introducing complexity requirements roughly modeled after ARM-based devices (Cortex-M4). The system complexity was limited in terms of available memory and computational resources. The memory limitation was addressed by setting the maximum number of model parameters in the neural network (128K) and fixing the numerical representation to INT8, and computational resource limitations were addressed by setting the maximum number of multiply-and-accumulate operations permitted at the inference time (30 MMACs). The actual classification was set to be done independently for provided one-second audio segments. For the system development, an open dataset totaling 64 hours of audio material recorded in multiple European cities with multiple recording devices was provided, and for the system evaluation, 22 hours of audio material was used. The system’s performance on the evaluation dataset was measured with multi-class cross-entropy (log loss) and classification accuracy (macro-averaged) metrics.

Results

The task received 48 submissions from 19 international teams. Most of the submissions adapted state-of-the-art convolutional networks to meet the computational requirements. All systems used log-mel energies or mel spectrograms as acoustic feature front-end, and data augmentations techniques were widely used. The most popular neural network architectures among the submissions were CNN-based, and these architectures often used computationally efficient depth-wise separable convolutions.

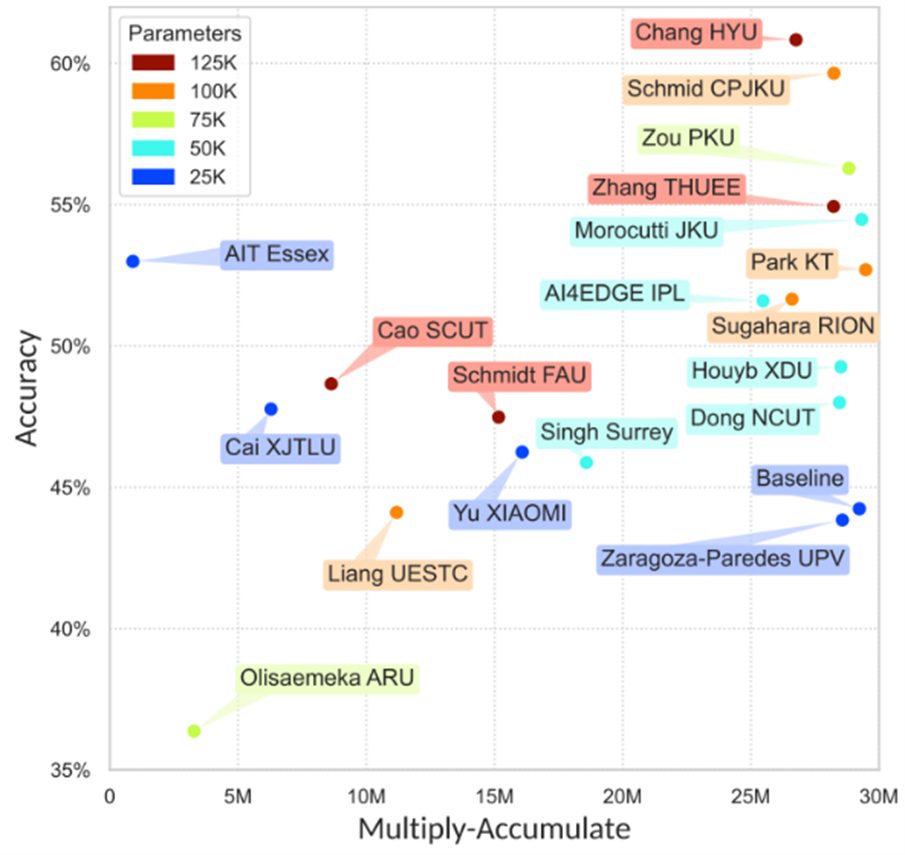

A performance versus computational cost of the submitted systems is shown in Figure 2. The best submission by Schmid et al. [1] (source code) had a log loss of 1.091 and an accuracy of 59.6% overtaking the baseline system (1.532 and 44.2%). This submission used a teacher-student approach where a classifier based on state-of-the-art pre-trained audio embedding (PaSST) was used as a teacher for a smaller student model based on RF-regularized CNN. The recording device generalization was enhanced during the system training by using the MixStyle data augmentation technique to mix frequency-wise statistics.

Low-complexity requirements were tackled in the submissions mainly by adapting existing state-of-the-art audio classification approaches (e.g., MobileNets or BC-ResNets) to meet the computational requirements. Another approach was to utilize very involved acoustic feature extraction solutions together with simplified neural network architectures. Generally, a lot of focus in the development was put on the training process and data augmentation strategies. Often used strategies were quantization-aware training and knowledge distillation with bigger pre-trained networks that were fine-tuned on the current task. Top performing systems in the challenge are using neural architectures with large receptive fields, transformer architectures, and coordinate attention to optimizing their performance.

All results on the DCASE Challenge task are available in DCASE community website, and a detailed analysis of the results and submitted system can be found in the publication [2].

Bibliography

[1] F. Schmid, S. Masoudian, K. Koutini and G. Widmer, “Knowledge Distillation from Transformers for Low-Complexity Acoustic Scene Classification,” in Proceedings of the 7th Detection and Classification of Acoustic Scenes and Events 2022 Workshop (DCASE2022), 2022.PDF

[2] I. Martín-Morató, F. Paissan, A. Ancilotto, T. Heittola, A. Mesaros, E. Farella, A. Brutti and T. Virtanen, “Low-Complexity Acoustic Scene Classification in DCASE 2022 Challenge,” in Proceedings of the 7th Detection and Classification of Acoustic Scenes and Events 2022 Workshop (DCASE2022), 2022.PDF

Blog signed by: Toni Heittola. of the TAU team

Menu

- Home

- About

- Experimentation

- Knowledge Hub

- ContactResults

- News & Events

- Contact

Funding

This project has received funding from the European Union’s Horizon 2020 Research and Innovation program under grant agreement No 957337. The website reflects only the view of the author(s) and the Commission is not responsible for any use that may be made of the information it contains.