Smart events: Observation of crowd behavior in the city of Novi Sad

Novi Sad is the second-largest city in Serbia and the capital of the province of Vojvodina. Located on the banks of the Danube, it has more than 300,000 inhabitants and has been one of the main scientific and cultural centers in the region for decades. The European Union has named Novi Sad a European Capital of Culture for 2022. The city is also home to EXIT, one of the major European music festivals, which was founded in 2000 in the University Park as a student movement. EXIT is held at the Petrovaradin Fortress in Novi Sad, with about 200,000 visitors and 10,000 artists performing on more than 40 stages.

Novi Sad has a vibrant ICT ecosystem, with world-leading companies such as Continental, 3Lateral, and Schneider Electric present in the city. It is home to the Faculty of Technical Sciences, part of the University of Novi Sad and one of the most prestigious higher education institutions in the country in the IT domain, with more than 1,200 employees and 12,000 students.

Abbreviations

- ICT = Information and Communication Technologies

- Wi-Fi = Wireless Fidelity

- LTE = Long Term Evolution

- LTE-M = LTE-MTC [Machine Type Communication]

- LTE-TDD = LTE - Time Division Duplex

- LTE-FDD = LTE - Frequency Division Duplex

- mMTC = massive Machine Type Communications

- RPi = Raspberry Pi

- GPS = Global Positioning System

- GPRS = General Packet Radio Service

- EGPRS = Enhanced GPRS

- 3GPP Rel-13 = 3rd Generation Partnership Project, Release 13

- GNSS = Global Navigation Satellite System

- USB = Universal Serial Bus

- UART = Universal Asynchronous Receiver-Transmitter

- I2C = Inter-Integrated Circuit

- SPI = Serial Peripheral Interface

- UL/DL = Uplink/Downlink

- ML = Machine Learning

- DL = Deep Learning

Use Case

The UNS (University of Novi Sad) experiment will support the other two pilots (Malta and Trento) by providing a specific small-scale use case and data for further processing. We will perform crowd classification based on drone and ground recordings, as described in the experimental setup. Data collection will take the form of staged recordings designed to replicate the crowd-monitoring events of interest for training. The following classes of crowds will be simulated:

- Neutral – the gathered people are standing and chatting;

- Party – people are dancing, singing, laughing, etc.;

- Anomaly – e.g., people are running away from a dangerous situation or from some suspicious behavior in general.

Recordings will be made in the squares of the UNS campus.

Why Drones?

In the context of the MARVEL project, the UNS team will evaluate the MARVEL methodologies using drones, cameras, and microphones for monitoring large open-air public events, such as the EXIT music festival. The main motivation for this combination of technologies is that large open-air events often lack appropriate infrastructure for observing the crowd's behavior. For example, street cameras at fixed positions can only provide side views, which might not be enough to detect an incident that requires intervention in a crowded area (one occurring in the middle of a crowd, for example). In addition, the necessary infrastructure may be missing altogether, which is often the case for events held outside main city areas.

The advantage of drone-based technologies is evident: drones can quickly change position and fly over the main event areas to check whether any problem has occurred. If a problem is spotted, local inference can be performed on the edge device (the drone) or at the fog location (a local server), alongside the information obtained from the data captured on the ground. The result can be sent, together with the event location, to the authorities or emergency crews. Drone communication with the fog layer can be done via Wi-Fi or LTE-M wireless connections. We plan to investigate both technologies because they differ in capabilities such as coverage area and supported data rate.
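To make the idea of sending a detection "along with the event location" more concrete, the following is a minimal Python sketch of what such a report could look like. It is not the MARVEL message format: the `EventReport` class, its field names, the `source` labels, the 0.8 alert threshold, and the example coordinates are all illustrative assumptions. Only the three crowd classes come from the use case described above.

```python
import json
from dataclasses import dataclass, asdict
from enum import Enum


class CrowdClass(str, Enum):
    """The three staged crowd classes named in the use case."""
    NEUTRAL = "neutral"
    PARTY = "party"
    ANOMALY = "anomaly"


@dataclass
class EventReport:
    """Hypothetical message an edge or fog node could send to responders."""
    crowd_class: CrowdClass
    confidence: float   # classifier score in [0, 1] (assumed field)
    latitude: float     # decimal degrees, e.g. from the drone's GNSS fix
    longitude: float    # decimal degrees
    source: str         # "drone" or "ground" (assumed labels)

    def needs_alert(self, threshold: float = 0.8) -> bool:
        """Alert only on confident anomaly detections (threshold is illustrative)."""
        return self.crowd_class is CrowdClass.ANOMALY and self.confidence >= threshold

    def to_json(self) -> str:
        """Serialize the report, storing the class as its plain string value."""
        payload = asdict(self)
        payload["crowd_class"] = self.crowd_class.value
        return json.dumps(payload)


if __name__ == "__main__":
    # Illustrative values only: a confident anomaly seen from the drone.
    report = EventReport(CrowdClass.ANOMALY, 0.93, 45.2461, 19.8517, "drone")
    if report.needs_alert():
        print(report.to_json())
```

Keeping the report small (a class label, a score, and two coordinates) means it could be delivered even over a low-rate link, while the raw audio-visual data stays at the edge or fog layer.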

Experimental setup

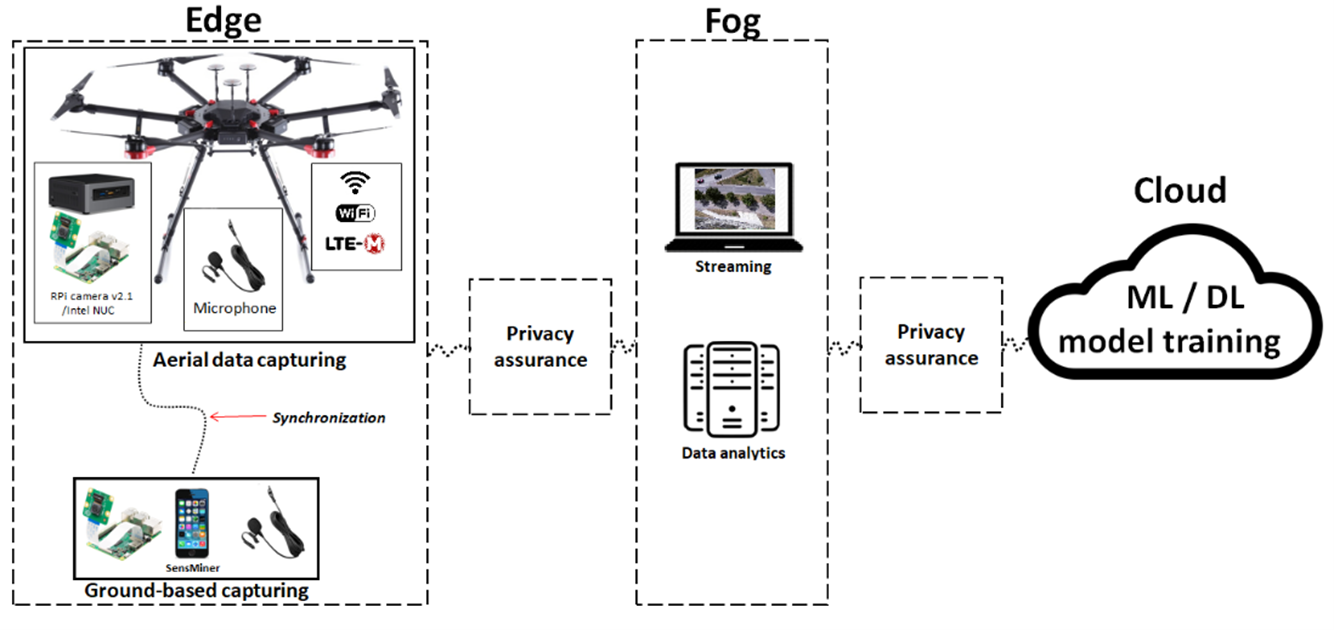

The experiment will follow the Edge-to-Fog-to-Cloud ubiquitous computing framework, which enables multi-modal perception and intelligence for audio-visual scene recognition and event detection in a smart city environment. The edge layer of the UNS experiment will involve data-capturing devices mounted on the drone and on the ground. An RPi v2.1 camera, one or two IFAG MEMS microphones, and an Intel NUC Mini PC will be mounted on the drone, whereas the ground-based supporting hardware will include the same RPi camera along with several more microphone boards for audio-based event localization. We will also use the sensMiner Android application (AUD) to collect complementary audio data with GPS tags, annotated on-site and in real time through the app. The set of staged events and the corresponding tags will be defined beforehand to facilitate the annotation process. The audio-visual streams will then be transmitted over Wi-Fi to a laptop or tablet for real-time viewing, whereas analytics will be performed on the local servers at the fog layer. Regarding wireless transmission, UNS will also explore LTE-M technology for its enhanced coverage and mobility capabilities.

Wireless Connectivity

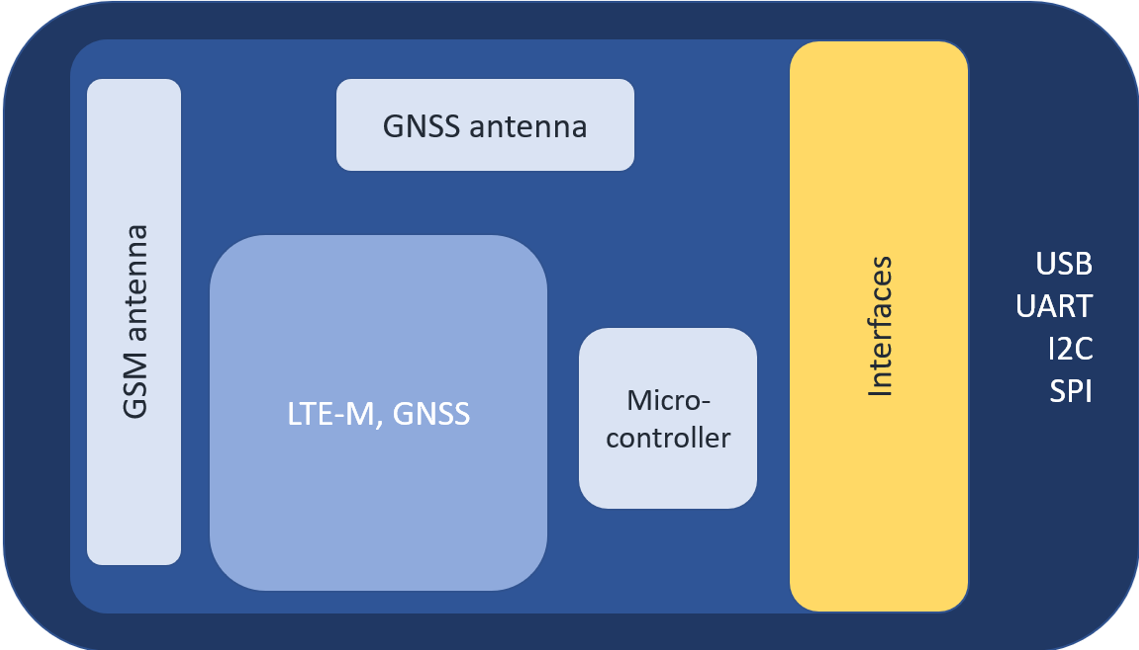

So far, we have carried out video-streaming experiments over a Wi-Fi connection using the experimental setup shown above, and we are also exploring the possibility of complementing the Wi-Fi connection with LTE-M. The LTE-M edge node, shown here, provides long-range, low-power data connectivity on LTE-TDD/LTE-FDD/GPRS/EGPRS networks and supports half-duplex operation in LTE networks based on LTE-M. LTE-M was released in 2016 as part of 3GPP Rel-13 (with some improvements in later releases). It is meant mainly to support low-power IoT and mMTC communications.

The drone is localized (by GPS coordinates) using a GNSS module and an antenna supporting GPS. The node can interface with the Raspberry Pi on the drone via USB (as a virtual COM port) or UART; I2C and SPI interfacing is also possible if needed. It supports all major network protocols (e.g., FTP(S), HTTP(S), UDP, TCP, SSL). The theoretical maximum UL/DL data rate is 375 kbps. Since LTE-M has not yet been released commercially in Serbia, it remains to be investigated which types of streams can be transferred for batch and real-time applications, and at what data rates.
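As a rough feel for what a 375 kbps ceiling implies, the following Python sketch does the back-of-the-envelope arithmetic for batch and real-time transfers. It is only an estimate under stated assumptions: the function names are hypothetical, the 50% `usable_fraction` is an arbitrary placeholder for protocol overhead and radio conditions (not a measured value), and the example clip size and audio bitrates are illustrative.

```python
LTE_M_PEAK_KBPS = 375  # theoretical maximum UL/DL rate quoted in the text


def transfer_time_s(size_bytes: int, rate_kbps: float = LTE_M_PEAK_KBPS) -> float:
    """Seconds needed to move size_bytes at rate_kbps (1 kbps = 1000 bit/s)."""
    return size_bytes * 8 / (rate_kbps * 1000)


def fits_in_real_time(stream_kbps: float,
                      rate_kbps: float = LTE_M_PEAK_KBPS,
                      usable_fraction: float = 0.5) -> bool:
    """True if a stream fits within an assumed usable share of the peak rate."""
    return stream_kbps <= rate_kbps * usable_fraction


if __name__ == "__main__":
    # Batch case: a 10 MB recorded clip sent at the theoretical peak rate.
    print(f"10 MB clip: {transfer_time_s(10_000_000):.1f} s at peak rate")

    # Real-time case: raw 16 kHz, 16-bit mono audio is 16000 * 16 = 256 kbps.
    raw_audio_kbps = 16_000 * 16 / 1000
    print("raw 256 kbps audio fits:", fits_in_real_time(raw_audio_kbps))
    print("64 kbps compressed audio fits:", fits_in_real_time(64))
```

Under these assumptions, compressed audio or compact event messages look feasible in real time, whereas video would need aggressive compression or batch transfer. The actual achievable rates remain to be measured once the network is available.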

Now that you have read about our pilot case, we’d like to invite you to share your thoughts. What other benefits or challenges do you see in observing crowd behavior during big events or on other occasions? Please reach out using our contact form. You can also find us on Twitter and LinkedIn.

Signed by: the UNS team at the Faculty of Technical Sciences, University of Novi Sad

Funding

This project has received funding from the European Union’s Horizon 2020 Research and Innovation program under grant agreement No 957337. The website reflects only the view of the author(s) and the Commission is not responsible for any use that may be made of the information it contains.
